Veeam & Proxmox VE

Veeam has made a strategic move by integrating the open-source virtualization solution Proxmox VE (Virtual Environment) into its portfolio. The integration signals Veeam’s commitment to the evolving needs of the open-source community and the open-source virtualization market, and positions the company as a forward-thinking player that is ready to support the rising tide of open-source solutions. The combination of Veeam’s data protection solutions with the flexibility of the Proxmox VE platform offers enterprises a compelling alternative that promises cost savings and enhanced data security.

With Proxmox VE, one of the most important and most frequently requested open-source hypervisors is now natively supported, and this could well shift the virtualization market!

Opportunities for Open-Source Virtualization

In many enterprises, a major hypervisor platform is already in place, accompanied by a robust backup solution – often Veeam. However, until recently, Veeam lacked direct support for Proxmox VE, leaving a gap for those who have embraced or are considering this open-source virtualization platform. The latest version of Veeam changes the game by introducing the capability to create and manage backups and restores directly within Proxmox VE environments, without the need for agents inside the VMs.

This advancement means that entire VMs can now be backed up and restored across the supported hypervisors, which provides a great deal of flexibility. Moreover, enterprises can integrate a new Proxmox VE-based cluster into their existing Veeam setup and manage everything from a single, central point. Having one solution for all platforms simplifies operations, reduces complexity, and makes data protection more efficient in environments that include multiple hypervisors.

A heavily underestimated benefit is the ability to migrate, copy, back up and restore entire VMs independently of their underlying hypervisor, also known as cross-platform recovery. As a result, operators can now move VMs from VMware ESXi / vSphere or Hyper-V to Proxmox VE nodes. This makes it possible to introduce and evaluate a new virtualization platform with little risk. For organizations looking to unify their virtualization and backup infrastructure, this update offers a significant leap forward.

Integration into Veeam

Integrating a new Proxmox cluster into an existing Veeam setup is a testament to the simplicity and user-centric design of both systems. Those familiar with Veeam will find the process to be intuitive and minimally disruptive, allowing for a seamless extension of their virtualization environment. This ease of integration means that your new Proxmox VE cluster can be swiftly brought under the protective umbrella of Veeam’s robust backup and replication services.

Despite the general ease of the process, it’s important to recognize that unique configurations and specific environments may present their own set of challenges. These corner cases, while not common, are worth noting as they can require special attention to ensure a smooth integration. Rest assured, however, that these are merely nuances in an otherwise straightforward procedure, and with a little extra care, even these can be managed effectively.

Overview

Starting with version 12.2, Proxmox VE support is provided by a plugin that is installed on the Veeam Backup server. Veeam Backup for Proxmox uses a distributed architecture that requires the deployment of worker nodes. These nodes act like data movers: they transfer the virtual machine data from the Proxmox VE hosts to the designated backup repository. The workers run on Linux and are deployed from the Veeam Backup server console. Their role is comparable to that of the proxy components in the AHV or VMware backup solutions.

At least one worker is required per cluster. For improved performance, one worker per Proxmox VE node might be considered. Keep in mind that each worker requires 6 vCPU, 6 GB memory and 100 GB disk space.
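To get a feeling for what this means for capacity planning, the per-worker figures above can simply be multiplied by the number of workers. The following is a minimal sketch; the function name and the three-node cluster are assumptions made for illustration only.

```shell
#!/usr/bin/env bash
# Per-worker requirements as stated in the text above.
WORKER_VCPU=6
WORKER_RAM_GB=6
WORKER_DISK_GB=100

# worker_totals N: print the combined resources needed for N workers.
worker_totals() {
    local count="$1"
    echo "$((count * WORKER_VCPU)) vCPU, $((count * WORKER_RAM_GB)) GB RAM, $((count * WORKER_DISK_GB)) GB disk"
}

# Example: one worker per node in a hypothetical three-node cluster.
worker_totals 3
```

For the three-node example this adds up to 18 vCPU, 18 GB RAM and 300 GB disk, which should be available in the cluster in addition to the regular workloads.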

Requirements

This blog post assumes that Veeam Backup & Replication version 12.2 or later is already installed and fully configured for another environment such as VMware. It also assumes that the Proxmox VE cluster already exists and that credentials with the roles required for backup and restore operations are available.

Configuration

The integration and configuration of a Proxmox VE cluster can be done entirely within the Veeam Backup & Replication Console and does not require any additional commands to be executed on a CLI. The previously mentioned worker nodes can be installed fully automatically.

Adding a Proxmox Server

To integrate a new Proxmox Server into the Veeam Backup & Replication environment, one must initiate the process by accessing the Veeam console. Subsequently, navigate through the designated sections to complete the addition:

Virtual Infrastructure -> Add Server

This procedure is consistent with the established protocol for incorporating nodes from other virtualization platforms that are compatible with Veeam.

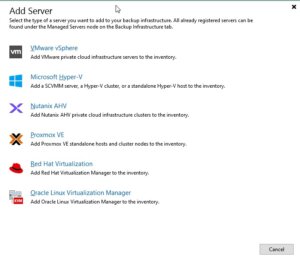

Afterwards, Veeam shows you a selection of possible and supported Hypervisors:

- VMware vSphere

- Microsoft Hyper-V

- Nutanix AHV

- Red Hat Virtualization

- Oracle Linux Virtualization Manager

- Proxmox VE

In this case, we simply choose Proxmox VE and proceed through the setup wizard.

During the next steps in the setup wizard, the authentication details, the hostname or IP address of the target Proxmox VE server and also a snapshot storage of the Proxmox VE server must be defined.

Hint: When it comes to the authentication details, take care to use working credentials for the SSH service on the Proxmox VE server. If you usually use the root@pam credentials for the web interface, you simply need to provide root to Veeam, because Veeam connects to the system over the SSH protocol.
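Before entering the credentials in the wizard, it can help to verify the SSH login manually. The following sketch only prints the commands by default; the host name pve1.example.com and the DRY_RUN switch are assumptions for illustration, while pveversion and pvesm status are standard Proxmox VE commands.

```shell
#!/usr/bin/env bash
# Hypothetical address; replace it with your Proxmox VE node.
PVE_HOST="${PVE_HOST:-pve1.example.com}"

# Print the command by default; set DRY_RUN=0 to really execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# pveversion confirms that the SSH login works and that the host runs Proxmox VE.
run ssh "root@${PVE_HOST}" pveversion

# pvesm status lists the configured storages; one of them is later chosen as snapshot storage.
run ssh "root@${PVE_HOST}" pvesm status
```

If both commands succeed when run with DRY_RUN=0, the same user name and password should also work in the Veeam wizard.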

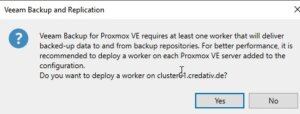

In one of the last steps of the setup wizard, Veeam offers to automatically install the required worker node. A worker node is a small VM that runs inside the cluster on the targeted Proxmox VE server. In general, a single worker node per cluster is enough, but to improve overall performance, one worker per node is recommended.

Usage

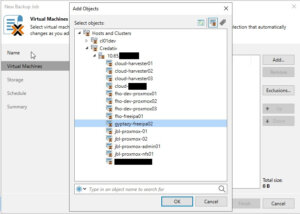

Once the Proxmox VE server has been successfully integrated into the Veeam inventory, it can be managed as effortlessly as any other supported hypervisor, such as VMware vSphere or Microsoft Hyper-V. A significant advantage, as shown in the screenshot, is the capability to centrally administrate various hypervisors and servers in clusters. This eliminates the necessity for a separate Veeam instance for each cluster, streamlining operations. Nonetheless, there may be specific scenarios where individual setups for each cluster are preferable.

As a result, this not only simplifies the operator’s work across different servers and clusters but finally also opens up the opportunity for cross-hypervisor recoveries.

Creating Backup Jobs

Creating a new backup job for one or more VMs in a Proxmox VE environment works exactly the way you already know from other hypervisors. Nevertheless, here is a quick summary of the required steps:

Open the Veeam Backup & Replication console on your backup server or management workstation. To start creating a backup job, navigate to the Home tab and click on Backup Job, then select Virtual machine from the drop-down menu.

When the New Backup Job wizard opens, you will need to enter a name and a description for the backup job. Click Next to proceed to the next step. Now, you will need to select the VMs that you want to back up. Click Add in the Virtual Machines step and choose the individual VMs or containers like folders, clusters, or entire hosts that you want to include in the backup. Once you have made your selection, click Next.

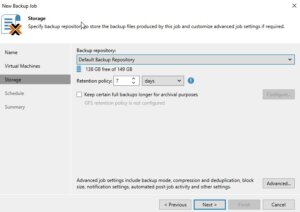

The next step is to specify where you want to store the backup files. In the Storage step, select the backup repository and decide on the retention policy that dictates how long you want to keep the backup data. After setting this up, click Next.

If you have configured multiple backup proxies, the next step allows you to specify which one to use. If you are not sure or if you prefer, you can let Veeam Backup & Replication automatically select the best proxy for the job. Click Next after making your choice.

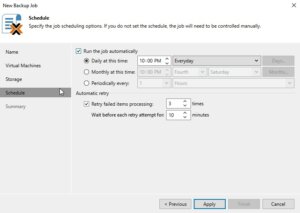

Now it is time to schedule when the backup job should run. In the Schedule step, you can set up the job to run automatically at specific times or in response to certain events. After configuring the schedule, click Next.

Review all the settings on the summary page to ensure they are correct. If everything looks good, click Finish to create the backup job.

If you want to run the backup job immediately to ensure everything works as expected, you can do so by right-clicking on the job and selecting Start. Alternatively, you can wait for the scheduled time to trigger the job automatically.

Restoring an entire VM

The process for restoring a full VM follows the standard procedure. However, it now includes the significant feature of cross-hypervisor restore, which allows VMs to be migrated between different hypervisor types. For example, when introducing Proxmox VE into a corporate setting, operators can migrate VMs from an existing hypervisor to the Proxmox VE cluster. Should any issues arise during the testing phase, the process also supports the reverse migration back to the original hypervisor. Let us have a look at the details.

Open the Veeam Backup & Replication console on your backup server or management workstation. To start a restore, navigate to the Home tab and open the Restore menu.

Choose the Entire VM restore option, which will launch the wizard for restoring a full virtual machine. The first step in the wizard will ask you to select a backup from which you want to restore. You will see a list of available backups; select the one that contains the VM you wish to restore and proceed to the next step by clicking Next.

Now, you must decide on the restore point. Typically, this will be the most recent backup, but you may choose an earlier point if necessary. After selecting the restore point, continue to the next step.

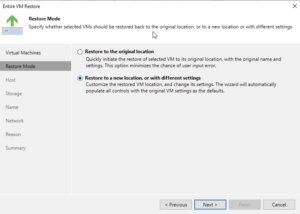

The wizard will then prompt you to specify the destination for the VM. This is where cross-hypervisor restore comes into play: the destination can be the original location, or a new location if you are performing a migration or do not want to overwrite the existing VM. Configure the network settings as required, ensuring that the restored VM will have the appropriate network access.

In the next step, you will have options regarding the power state of the VM after the restoration. You can choose to power on the VM automatically or leave it turned off, depending on your needs.

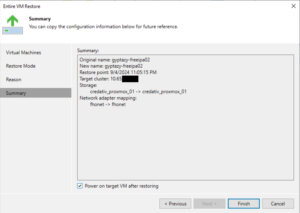

Before finalizing the restore process, review all the settings to make sure they align with your intended outcome. This is your chance to go back and make any necessary adjustments. Once you’re satisfied with the configuration, proceed to restore the VM by clicking Finish.

The restoration process will begin, and its progress can be monitored within the Veeam Backup & Replication console. Depending on the size of the VM and the performance of your backup storage and network, the restoration can take some time.

File-Level-Restore

Open the Veeam Backup & Replication console on your backup server or management workstation. To start a file-level restore, navigate to the Home tab and open the Restore menu.

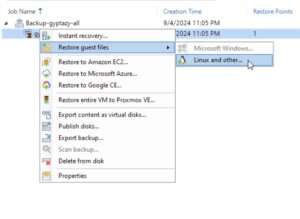

Select Restore guest files. The wizard for file-level recovery will start, guiding you through the necessary steps. The first step involves choosing the VM backup from which you want to restore files. Browse through the list of available backups, select the appropriate one, and then click Next to proceed.

Choose the restore point that you want to use for the file-level restore. This is typically the most recent backup, but you can select an earlier one if needed. After picking the restore point, click Next to continue.

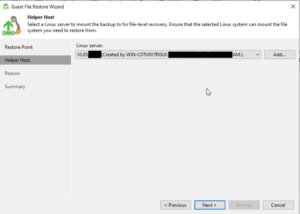

At this stage, you may need to choose the operating system of the VM that you are restoring files from. This is particularly important if the backup is of a different OS than the one on the Veeam Backup & Replication server because it will determine the type of helper appliance required for the restore.

Veeam Backup & Replication will prompt you to deploy a helper appliance if the backup is from an OS that is not natively supported by the Windows-based Veeam Backup & Replication server. Follow the on-screen instructions to deploy the helper appliance, which will facilitate the file-level restore process.

Once the helper appliance is ready, you will be able to browse the file system of the backup. Navigate through the backup to locate the files or folders you wish to restore.

After selecting the files or folders for restoration, you will be prompted to choose the destination where you want to restore the data. You can restore to the original location or specify a new location, depending on your requirements.

Review your selections to confirm that the correct files are being restored and to the right destination. If everything is in order, proceed with the restoration by clicking Finish.

The file-level restore process will start, and you can monitor the progress within the Veeam Backup & Replication console. The time it takes to complete the restore will depend on the size and number of files being restored, as well as the performance of your backup storage and network.

Conclusion

In summary, the latest update to Veeam introduces an important and very welcome integration with Proxmox VE, filling a significant gap for enterprises that have adopted this open-source virtualization platform. By enabling direct backups and restores of entire VMs across different hypervisors without the need for in-VM agents, Veeam now offers considerable flexibility and simplicity in managing mixed environments. This advancement not only streamlines operations and enhances data protection strategies but also allows organizations to migrate to and evaluate new open-source virtualization platforms like Proxmox VE with minimal risk. It is great to see that more and more companies are putting effort into supporting open-source solutions, which underlines the ongoing importance of open-source based products in enterprises.

Additionally, for those starting fresh with Proxmox, the Proxmox Backup Server remains a viable open-source alternative and you can find our blog post about configuring the Proxmox Backup Server right here. Overall, this update represents a significant step forward in unifying virtualization and backup infrastructures, offering both versatility and ease of integration.

We are always here to help and assist you with further consulting, planning, and integration needs. Whether you are exploring new virtualization platforms, optimizing your current infrastructure, or looking for expert guidance on your backup strategies, our team is dedicated to ensuring your success every step of the way. Do not hesitate to reach out to us for personalized support and tailored solutions to meet your unique requirements in virtualization or backup environments.

In the world of virtualization, ensuring data redundancy and high availability is crucial. Proxmox Virtual Environment (PVE) is a powerful open-source platform for enterprise virtualization, combining KVM hypervisor and LXC containers. One of the key features that Proxmox offers is local storage replication, which helps in maintaining data integrity and availability in case of hardware failures. In this blog post, we will delve into the concept of local storage replication in Proxmox, its benefits, and how to set it up.

What is Local Storage Replication?

Local storage replication in Proxmox refers to the process of copying the data of a VM or container from the local storage of one node to the local storage of another node within the same Proxmox cluster. This ensures that if one node or its storage fails, the data is still available on another node, thereby minimizing downtime and data loss. This is particularly useful in environments where high availability is critical.

Benefits

- Data Redundancy: By replicating data across multiple storage devices, you ensure that a copy of your data is always available, even if one device fails.

- High Availability: In the event of hardware failure, the system can quickly switch to the replicated data, ensuring minimal disruption to services.

Caveat

Please note that data written between the last synchronization and the failure of the node may be lost. If you cannot tolerate even a small data loss, use shared storage (Ceph, NFS, …) in the cluster instead.
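To make this caveat more tangible, the possible loss window can be estimated roughly as the replication interval plus the time a single synchronization run takes. The following sketch compares this estimate with the amount of data loss you are willing to tolerate; the function name and all numbers are assumptions chosen for illustration.

```shell
#!/usr/bin/env bash
# replication_fits INTERVAL_MIN SYNC_MIN TOLERATED_MIN
# Rough estimate: worst-case loss window = replication interval + duration of one sync.
replication_fits() {
    local interval_min="$1" sync_min="$2" tolerated_min="$3"
    local worst=$((interval_min + sync_min))
    if [ "$worst" -le "$tolerated_min" ]; then
        echo "ok: worst case ${worst} min <= tolerated ${tolerated_min} min"
    else
        echo "too slow: worst case ${worst} min > tolerated ${tolerated_min} min"
        return 1
    fi
}

# Example: replication every 15 minutes, a sync takes about 2 minutes,
# and up to 30 minutes of lost data would be acceptable.
replication_fits 15 2 30
```

If the estimate exceeds what you can tolerate, either shorten the replication interval or consider shared storage as mentioned above.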

Setting Up Local Storage Replication in Proxmox

Setting up local storage replication in Proxmox involves a few steps. Here’s a step-by-step guide to help you get started:

Step 1: Prepare Your Environment

Ensure that you have a Proxmox cluster set up with at least two nodes. Each node should have local ZFS storage configured.

Step 2: Configure Storage Replication

- Access the Proxmox Web Interface: Log in to the Proxmox web interface.

- Navigate to Datacenter: In the left-hand menu, click on Datacenter.

- Select Storage: Under the Datacenter menu, click on Storage.

- Add Storage: Click on Add and select the type of storage you want to replicate.

- Configure Storage: Fill in the required details for the ZFS storage (one local storage per node).

Step 3: Set Up Replication

- Navigate to the Node: In the left-hand menu, select the node where you want to set up replication.

- Select the VM/CT: Click on the virtual machine (VM) or container (CT) you want to replicate.

- Configure Replication: Go to the Replication tab and click on Add.

- Select Target Node: Choose the target node where the data will be replicated to.

- Schedule Replication: Set the replication schedule according to your needs (e.g. every 5 minutes, hourly).
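The same replication job can also be created on the command line with pvesr, the replication tool that ships with Proxmox VE. The following is a minimal sketch: the guest ID 100, the target node pve2 and the helper function are assumptions for illustration. On machines without pvesr, the script only prints what it would do.

```shell
#!/usr/bin/env bash
# Hypothetical values: guest 100 is replicated to node "pve2" every 5 minutes.
VMID=100
TARGET_NODE="pve2"
SCHEDULE="*/5"

# A replication job ID has the form <vmid>-<job number>; 0 is the first job of a guest.
replication_job_id() {
    echo "${1}-${2:-0}"
}

JOB_ID="$(replication_job_id "$VMID")"

if command -v pvesr >/dev/null 2>&1; then
    # Create the job on the node that currently runs the guest ...
    pvesr create-local-job "$JOB_ID" "$TARGET_NODE" --schedule "$SCHEDULE"
    # ... and show its state afterwards.
    pvesr status --guest "$VMID"
else
    echo "pvesr not found (not a Proxmox VE node); would create job ${JOB_ID} -> ${TARGET_NODE} with schedule ${SCHEDULE}"
fi
```

Jobs created this way show up in the Replication tab of the web interface just like jobs created there.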

Step 4: Monitor Replication

Once replication is set up, you can monitor its status in the Replication tab. Proxmox provides detailed logs and status updates to help you ensure that replication is functioning correctly.

Best Practices for Local Storage Replication

- Regular Backups: While replication provides redundancy, it is not a substitute for regular backups. Ensure that you have a robust backup strategy in place. Use tools like the Proxmox Backup Server (PBS) for this task.

- Monitor Storage Health: Regularly check the health of your storage devices to preemptively address any issues.

- Test Failover: Periodically test the failover process to ensure that your replication setup works as expected in case of an actual failure.

- Optimize Replication Schedule: Balance the replication frequency with your performance requirements and network bandwidth to avoid unnecessary load.

Conclusion

Local storage replication in Proxmox is a powerful feature that enhances data redundancy and high availability. By following the steps outlined in this blog post, you can set up and manage local storage replication in your Proxmox environment, ensuring that your data remains safe and accessible even in the face of hardware failures. Remember to follow best practices and regularly monitor your replication setup to maintain optimal performance and reliability.

You can find further information here about the Proxmox storage replication:

https://pve.proxmox.com/wiki/Storage_Replication
https://pve.proxmox.com/pve-docs/chapter-pvesr.html

Happy virtualizing!

With version 256, systemd introduced run0. Lennart Poettering describes run0 as an alternative to sudo and explains on Mastodon at the same time what he sees as the problem with sudo.

In this blog post, however, we do not want to go into the strengths or weaknesses of sudo, but take a closer look at run0 and use it as a sudo alternative.

Unlike sudo, run0 uses neither the configuration file /etc/sudoers nor a SUID bit to extend user permissions. In the background, it uses systemd-run to start new processes, which has been in systemd for several years.

PolKit is used when it comes to checking whether a user has the appropriate permissions to use run0. All rules that the configuration of PolKit provides can be used here. In our example, we will concentrate on a simple variant.

Experimental Setup

For our example, we use a t2.micro EC2 instance with Debian Bookworm. Since run0 was only introduced in systemd version 256 and Debian Bookworm is still delivered with version 252 at the current time, we must first add the Debian Testing repository.

❯ ssh admin@2a05:d014:ac8:7e00:c4f4:af36:3938:206e
…
admin@ip-172-31-15-135:~$ sudo su -
root@ip-172-31-15-135:~# cat <<EOF > /etc/apt/sources.list.d/testing.list
> deb https://deb.debian.org/debian testing main
> EOF
root@ip-172-31-15-135:~# apt update
Get:1 file:/etc/apt/mirrors/debian.list Mirrorlist [38 B]
Get:5 file:/etc/apt/mirrors/debian-security.list Mirrorlist [47 B]
Get:7 https://deb.debian.org/debian testing InRelease [169 kB]
Get:2 https://cdn-aws.deb.debian.org/debian bookworm InRelease [151 kB]
…
Fetched 41.3 MB in 6s (6791 kB/s)
Reading package lists... Done
Building dependency tree... Done
Reading state information... Done
299 packages can be upgraded. Run 'apt list --upgradable' to see them.
root@ip-172-31-15-135:~# apt-cache policy systemd
systemd:
  Installed: 252.17-1~deb12u1
  Candidate: 256.1-2
  Version table:
     256.1-2 500
        500 https://deb.debian.org/debian testing/main amd64 Packages
     254.5-1~bpo12+3 100
        100 mirror+file:/etc/apt/mirrors/debian.list bookworm-backports/main amd64 Packages
     252.22-1~deb12u1 500
        500 mirror+file:/etc/apt/mirrors/debian.list bookworm/main amd64 Packages
 *** 252.17-1~deb12u1 100
        100 /var/lib/dpkg/status
root@ip-172-31-15-135:~# apt-get install systemd
…
root@ip-172-31-15-135:~# dpkg -l | grep systemd
ii  libnss-resolve:amd64  256.1-2  amd64  nss module to resolve names via systemd-resolved
ii  libpam-systemd:amd64  256.1-2  amd64  system and service manager - PAM module
ii  libsystemd-shared:amd64  256.1-2  amd64  systemd shared private library
ii  libsystemd0:amd64  256.1-2  amd64  systemd utility library
ii  systemd  256.1-2  amd64  system and service manager
ii  systemd-cryptsetup  256.1-2  amd64  Provides cryptsetup, integritysetup and veritysetup utilities
ii  systemd-resolved  256.1-2  amd64  systemd DNS resolver
ii  systemd-sysv  256.1-2  amd64  system and service manager - SysV compatibility symlinks
ii  systemd-timesyncd  256.1-2  amd64  minimalistic service to synchronize local time with NTP servers
root@ip-172-31-15-135:~# reboot
…
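Whether a given system is already new enough for run0 can be checked with a few lines of shell. This is a minimal sketch; the function names are our own, and it assumes that the first line of systemctl --version has the usual form "systemd 252 (252.17-1~deb12u1)".

```shell
#!/usr/bin/env bash
# Print the major version of the installed systemd, e.g. "252" or "256".
systemd_major() {
    systemctl --version 2>/dev/null | awk 'NR == 1 { sub(/[^0-9].*/, "", $2); print $2 }'
}

# supports_run0 MAJOR: succeeds if MAJOR is at least 256, the release that introduced run0.
supports_run0() {
    [ "$1" -ge 256 ] 2>/dev/null
}

if command -v systemctl >/dev/null 2>&1 && supports_run0 "$(systemd_major)"; then
    echo "systemd is new enough, run0 should be available"
else
    echo "systemd is older than 256 or not present, run0 is not available"
fi
```

On the unmodified Bookworm instance this reports that run0 is not available; after the upgrade and reboot shown above, it should report the opposite.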

The user admin is used for the initial login. This user has already been stored in the file /etc/sudoers.d/90-cloud-init-users by cloud-init and can therefore execute any sudo commands without being prompted for a password.

sudo cat /etc/sudoers.d/90-cloud-init-users
# Created by cloud-init v. 22.4.2 on Thu, 27 Jun 2024 09:22:48 +0000

# User rules for admin
admin ALL=(ALL) NOPASSWD:ALL

Analogous to sudo, we now want to enable run0 for the user admin.

Without further configuration, the user admin receives a login prompt asking for the root password. This is the default behavior of PolKit.

admin@ip-172-31-15-135:~$ run0
==== AUTHENTICATING FOR org.freedesktop.systemd1.manage-units ====
Authentication is required to manage system services or other units.
Authenticating as: Debian (admin)
Password:

Since this does not correspond to the behavior we want, we have to help a little in the form of a PolKit rule. Additional PolKit rules are stored under /etc/polkit-1/rules.d/.

root@ip-172-31-15-135:~# cat <<EOF > /etc/polkit-1/rules.d/99-run0.rules
> polkit.addRule(function(action, subject) {
>     if (action.id === "org.freedesktop.systemd1.manage-units") {
>         if (subject.user === "admin") {
>             return polkit.Result.YES;
>         }
>     }
> });
> EOF

The rule is structured as follows: first, it checks whether the requested action is org.freedesktop.systemd1.manage-units. If this is the case, it checks whether the executing user is the user admin. If both conditions are met, the rule returns polkit.Result.YES and no password is requested.

Alternatively, the rule could check whether the executing user belongs to a specific group, such as admin or sudo (if (subject.isInGroup("admin"))). It would also be conceivable to ask the user for their own password instead of the root password.

The new rule is automatically read in by PolKit and can be used immediately. The user admin can now execute run0 analogously to our initial sudo configuration.
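Both alternatives can be combined in one rule. The sketch below writes such a rule to a scratch directory, so that nothing on the system changes by accident; the file name, the RULES_DIR variable and the choice of the group sudo are assumptions for illustration. To activate the rule, the file would have to be placed under /etc/polkit-1/rules.d/.

```shell
#!/usr/bin/env bash
# Assumption: write to a temporary directory unless RULES_DIR is set explicitly.
RULES_DIR="${RULES_DIR:-$(mktemp -d)}"
RULE_FILE="${RULES_DIR}/99-run0-group.rules"

# The quoted heredoc delimiter keeps the shell from touching the JavaScript.
cat <<'EOF' > "$RULE_FILE"
// Members of the group "sudo" may use run0 after entering their own password.
polkit.addRule(function(action, subject) {
    if (action.id === "org.freedesktop.systemd1.manage-units" &&
        subject.isInGroup("sudo")) {
        return polkit.Result.AUTH_SELF;
    }
});
EOF

echo "Wrote ${RULE_FILE}"
```

Keep in mind that the action org.freedesktop.systemd1.manage-units is not specific to run0. A rule like this also affects other operations that manage systemd units, so asking for the user's own password is the more cautious variant compared to polkit.Result.YES.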
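On a mixed fleet where only some machines already ship systemd 256, scripts can fall back to sudo when run0 is missing. The following wrapper is a minimal sketch; the function names pick_elevator and elevate are hypothetical and not part of systemd or sudo.

```shell
#!/usr/bin/env bash
# Print "run0" if it is installed, otherwise "sudo".
pick_elevator() {
    if command -v run0 >/dev/null 2>&1; then
        echo run0
    else
        echo sudo
    fi
}

# elevate CMD [ARGS...]: run a command with elevated privileges via run0 or sudo.
elevate() {
    "$(pick_elevator)" "$@"
}

echo "Privileged commands would run via: $(pick_elevator)"
# Example (not executed here): elevate systemctl restart ssh
```

This keeps existing automation working while the PolKit rules are being rolled out step by step.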

Process Structure

The following listing shows the difference in the call stack between sudo and run0. While sudo starts separate child processes, run0 starts a new process via systemd-run, which is why the shell in the second listing has the parent PID 1.

root@ip-172-31-15-135:~# sudo su -
root@ip-172-31-15-135:~# ps fo tty,ruser,ppid,pid,sess,cmd
TT       RUSER     PPID     PID    SESS CMD
pts/2    admin     1484    1514    1484 sudo su -
pts/0    admin     1514    1515    1515  \_ sudo su -
pts/0    root      1515    1516    1515      \_ su -
pts/0    root      1516    1517    1515          \_ -bash
pts/0    root      1517    1522    1515              \_ ps fo tty,ruser,ppid,pid,sess,cmd

admin@ip-172-31-15-135:~$ run0
root@ip-172-31-15-135:/home/admin# ps fo tty,ruser,ppid,pid,sess,cmd
TT       RUSER     PPID     PID    SESS CMD
pts/0    root         1    1562    1562 -/bin/bash
pts/0    root      1562    1567    1562  \_ ps fo tty,ruser,ppid,pid,sess,cmd

Conclusion and Note

As the example above has shown, run0 can generally be used as a simple sudo alternative and offers some security-relevant advantages. If run0 prevails over sudo, this will not happen within the next year. Some distributions simply lack a sufficiently up-to-date systemd version. In addition, the configuration of PolKit is not one of the daily tasks for some admins and know-how must first be built up here in order to transfer any existing sudo “constructs”.

In addition, a decisive advantage of run0 should not be ignored: By default, it colors the background red! 😉

If you had the choice, would you rather take Salsa or Guacamole? Let me explain why you should choose Guacamole over Salsa.

In this blog article, we want to take a look at one of the smaller Apache projects out there called Apache Guacamole. Apache Guacamole allows administrators to run a web based client tool for accessing remote applications and servers. This can include remote desktop systems, applications or terminal sessions. Users can simply access them by using their web browsers. No special client or other tools are required. From there, they can login and access all pre-configured remote connections that have been specified by an administrator.

Guacamole supports a wide variety of protocols such as VNC, RDP, and SSH. This way, users can access basically anything from remote terminal sessions to full-fledged graphical user interfaces provided by operating systems like Debian, Ubuntu, Windows and many more.

Convert every window application to a web application

If we take this idea further, technically every window application that isn’t designed to run as a web application can be turned into one by using Apache Guacamole. We helped a customer bring their legacy application to Kubernetes, so that users could run it in their web browsers. Sure, reimplementing the application from the ground up, so that it follows the Cloud Native principles, is the preferred solution. As always, though, effort, experience and costs may exceed the available time and budget, and in those cases Apache Guacamole can provide a relatively easy way to realize such projects.

In this blog article, I want to show you how easy it is to run a legacy window application as a web app on Kubernetes. For this, we will use a Kubernetes cluster created by kind and create a Kubernetes Deployment to make kate – a KDE based text editor – our own web application. It’s just an example, so there might be better applications to transform, but this one should be fine to show you the concepts behind Apache Guacamole.

So, without further ado, let’s create our kate web application.

Preparation of Kubernetes

Before we can start, we must make sure that we have a Kubernetes cluster, that we can test on. If you already have a cluster, simply skip this section. If not, let’s spin one up by using kind.

kind is a lightweight implementation of Kubernetes that can be run on every machine. It’s written in Go and can be installed like this:

# For AMD64 / x86_64

[ $(uname -m) = x86_64 ] && curl -Lo ./kind https://kind.sigs.k8s.io/dl/v0.22.0/kind-linux-amd64

# For ARM64

[ $(uname -m) = aarch64 ] && curl -Lo ./kind https://kind.sigs.k8s.io/dl/v0.22.0/kind-linux-arm64

chmod +x ./kind

sudo mv ./kind /usr/local/bin/kind

Next, we need to install some dependencies for our cluster. This includes for example docker and kubectl.

$ sudo apt install docker.io kubernetes-client

Since we create our Kubernetes cluster with kind, we need Docker because the cluster runs within Docker containers on your host machine. Installing kubectl allows us to access the Kubernetes cluster after creating it.

Once those packages are installed, we can start to create our cluster. First, we must define a cluster configuration. It defines which ports are accessible from our host machine, so that we can access our Guacamole application. Remember, the cluster itself is operated within Docker containers, so we must ensure that we can access it from our machine. For this, we define the following configuration which we save in a file called cluster.yaml:

kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
nodes:
- role: control-plane
  extraPortMappings:
  - containerPort: 30000
    hostPort: 30000
    listenAddress: "127.0.0.1"
    protocol: TCP

Hereby, we basically map the container’s port 30000 to our local machine’s port 30000, so that we can easily access it later on. Keep this in mind because it will be the port that we will use with our web browser to access our kate instance.

Ultimately, this configuration is consumed by kind. Besides the port configuration, it lets you adjust many other parameters of your cluster that are not covered here. It’s worth taking a look at kind’s documentation for this.

As soon as you have saved the configuration to cluster.yaml, we can start to create our cluster:

$ sudo kind create cluster --name guacamole --config cluster.yaml

Creating cluster "guacamole" ...

✓ Ensuring node image (kindest/node:v1.29.2) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-guacamole"

You can now use your cluster with:

kubectl cluster-info --context kind-guacamole

Have a question, bug, or feature request? Let us know! https://kind.sigs.k8s.io/#community 🙂

Since we don’t want to run everything in root context, let’s export the kubeconfig, so that we can use it with kubectl as our unprivileged user:

$ sudo kind export kubeconfig \
    --name guacamole \
    --kubeconfig $PWD/config
$ export KUBECONFIG=$PWD/config
$ sudo chown $(logname): $KUBECONFIG

By doing so, we are ready and can access our Kubernetes cluster using kubectl now. This is our baseline to start migrating our application.
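Before moving on, it can help to confirm that kubectl really talks to the new cluster. The following is only a minimal sketch: the function name check_cluster is made up for illustration, while the context name kind-guacamole is the one kind printed when the cluster was created.

```shell
#!/bin/bash
# Hypothetical helper (not part of kind or kubectl): checks that the
# cluster behind the given kubectl context answers API requests.
check_cluster() {
    local context="${1:-kind-guacamole}"
    kubectl cluster-info --context "$context" &&
        kubectl get nodes --context "$context"
}

# Usage:
#   check_cluster              # uses the kind-guacamole context
#   check_cluster my-context   # any other context name
```

If both commands succeed, the exported kubeconfig works for the unprivileged user and the control-plane node is listed.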

Creation of the Guacamole Deployment

In order to run our application on Kubernetes, we need some sort of workload resource. Typically, you could create a Pod, Deployment, StatefulSet or DaemonSet to run workloads on a cluster.

Let’s create the Kubernetes Deployment for our own application. The example below shows the deployment’s general structure. Each container definition is explained in more detail with its own example afterwards.

apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    app: web-based-kate
  name: web-based-kate
spec:
  replicas: 1
  selector:
    matchLabels:
      app: web-based-kate
  template:
    metadata:
      labels:
        app: web-based-kate
    spec:
      containers:
      # The guacamole server component that each
      # user will connect to via their browser
      - name: guacamole-server
        image: docker.io/guacamole/guacamole:1.5.4
        ...
      # The daemon that opens the connection to the
      # remote entity
      - name: guacamole-guacd
        image: docker.io/guacamole/guacd:1.5.4
        ...
      # Our own self written application that we
      # want to make accessible via the web.
      - name: web-based-kate
        image: registry.example.com/own-app/web-based-kate:0.0.1
        ...
      volumes:
      - name: guacamole-config
        secret:
          secretName: guacamole-config
      - name: guacamole-server
        emptyDir: {}
      - name: web-based-kate-home
        emptyDir: {}
      - name: web-based-kate-tmp
        emptyDir: {}

As you can see, we need three containers and some volumes for our application. The first two containers are dedicated to Apache Guacamole itself. The first one is the server component, which is the external endpoint for clients to access our web application. It provides the web server as well as the user management and the configuration needed to run Apache Guacamole.

Next to this, there is the guacd daemon. This is the core component of Guacamole which creates the remote connections to the application based on the configuration done to the server. This daemon forwards the remote connection to the clients by making it accessible to the Guacamole server which then forwards the connection to the end user.

Finally, we have our own application. It will offer a connection endpoint to the guacd daemon using one of Guacamole’s supported protocols and provide the Graphical User Interface (GUI).

Guacamole Server

Now, let’s dive deeper into each container specification, starting with the Guacamole server instance. It handles the session and user management and contains the configuration which defines which remote connections are available.

- name: guacamole-server
  image: docker.io/guacamole/guacamole:1.5.4
  env:
  - name: GUACD_HOSTNAME
    value: "localhost"
  - name: GUACD_PORT
    value: "4822"
  - name: GUACAMOLE_HOME
    value: "/data/guacamole/settings"
  - name: HOME
    value: "/data/guacamole"
  - name: WEBAPP_CONTEXT
    value: ROOT
  volumeMounts:
  - name: guacamole-config
    mountPath: /data/guacamole/settings
  - name: guacamole-server
    mountPath: /data/guacamole
  ports:
  - name: http
    containerPort: 8080
  securityContext:
    allowPrivilegeEscalation: false
    privileged: false
    readOnlyRootFilesystem: true
    capabilities:
      drop: ["all"]
  resources:
    limits:
      cpu: "250m"
      memory: "256Mi"
    requests:
      cpu: "250m"
      memory: "256Mi"

Since it needs to connect to the guacd daemon, we have to provide the connection information for guacd by passing them into the container using environment variables like GUACD_HOSTNAME or GUACD_PORT. In addition, Guacamole would usually be accessible via http://<your domain>/guacamole.

This behavior, however, can be adjusted by modifying the WEBAPP_CONTEXT environment variable. In our case, we don’t want users to type in /guacamole to access it, but simply to use http://<your domain>/.

Guacamole Guacd

Then, there is the guacd daemon.

- name: guacamole-guacd
  image: docker.io/guacamole/guacd:1.5.4
  args:
  - /bin/sh
  - -c
  - /opt/guacamole/sbin/guacd -b 127.0.0.1 -L $GUACD_LOG_LEVEL -f
  securityContext:
    allowPrivilegeEscalation: true
    privileged: false
    readOnlyRootFilesystem: true
    capabilities:
      drop: ["all"]
  resources:
    limits:
      cpu: "250m"
      memory: "512Mi"
    requests:
      cpu: "250m"
      memory: "512Mi"

It’s worth mentioning that you should modify the arguments used to start the guacd container. In the example above, we want guacd to only listen on localhost for security reasons. All containers within the same pod share the same network namespace. As a result, they can access each other via localhost. There is no need to make this service accessible to other services running outside of this pod, so we can limit it to localhost only. To achieve this, you need to set the -b 127.0.0.1 parameter which sets the corresponding listen address. Since you need to overwrite the whole command, don’t forget to also specify the -L and -f parameters. The first one sets the log level and the second one keeps the process in the foreground.

Web Based Kate

To finish everything off, we have the kate application which we want to transform to a web application.

- name: web-based-kate
  image: registry.example.com/own-app/web-based-kate:0.0.1
  env:
  - name: VNC_SERVER_PORT
    value: "5900"
  - name: VNC_RESOLUTION_WIDTH
    value: "1280"
  - name: VNC_RESOLUTION_HEIGHT
    value: "720"
  securityContext:
    allowPrivilegeEscalation: true
    privileged: false
    readOnlyRootFilesystem: true
    capabilities:
      drop: ["all"]
  volumeMounts:
  - name: web-based-kate-home
    mountPath: /home/kate
  - name: web-based-kate-tmp
    mountPath: /tmp

Configuration of our Guacamole setup

With the deployment in place, we need to prepare the configuration for our Guacamole setup. To tell Guacamole which users exist and which connections should be offered, we need to provide a mapping configuration.

In this example, a simple user mapping is shown for demonstration purposes. It uses a static mapping defined in an XML file that is handed over to the Guacamole server. Typically, you would use other authentication methods instead, like a database or LDAP.

For now, however, let’s continue with our static one. For this, we simply define a Kubernetes Secret which is mounted into the Guacamole server. It defines two configuration files. One is guacamole.properties, Guacamole’s main configuration file. The other one is user-mapping.xml, which contains all available users and their connections.

apiVersion: v1
kind: Secret
metadata:
  name: guacamole-config
stringData:
  guacamole.properties: |
    enable-environment-properties: true
  user-mapping.xml: |
    <user-mapping>
      <authorize username="admin" password="PASSWORD" encoding="sha256">
        <connection name="web-based-kate">
          <protocol>vnc</protocol>
          <param name="hostname">localhost</param>
          <param name="port">5900</param>
        </connection>
      </authorize>
    </user-mapping>

As you can see, we only defined one user called admin who can use a connection called web-based-kate. To access the kate instance, Guacamole uses VNC as the configured protocol. To make this happen, our web application must offer a VNC server port on the other side, so that the guacd daemon can access it and forward the remote session to clients. Keep in mind that you need to replace the string PASSWORD with the SHA-256 hash of the actual password. For the password test, the hash looks like this:

$ echo -n "test" | sha256sum

9f86d081884c7d659a2feaa0c55ad015a3bf4f1b2b0b822cd15d6c15b0f00a08 -
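To avoid copying the hash by hand, the command above can be wrapped in a small function. This is only a sketch; the name hash_password is an assumption made for illustration and is not part of Guacamole.

```shell
#!/bin/bash
# Hypothetical helper: prints the hex-encoded SHA-256 digest of a password
# in the form expected by encoding="sha256" in user-mapping.xml.
hash_password() {
    # printf avoids the trailing newline that a plain echo would add,
    # cut strips the " -" file name column printed by sha256sum.
    printf '%s' "$1" | sha256sum | cut -d ' ' -f 1
}

# Usage:
#   hash_password "test"
```

The printed value can then replace the PASSWORD placeholder in the Secret.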

Next, the hostname parameter is referencing the corresponding VNC server of our kate container. Since we are starting our container alongside with our Guacamole containers within the same pod, the Guacamole Server as well as the guacd daemon can access this application via localhost. There is no need to set up a Kubernetes Service in front of it since only guacd will access the VNC server and forward the remote session via HTTP to clients accessing Guacamole via their web browsers. Finally, we also need to specify the VNC server port which is typically 5900 but this could be adjusted if needed.

The corresponding guacamole.properties is quite short. By enabling the enable-environment-properties configuration parameter, we make sure that every Guacamole configuration parameter can also be set via environment variables. This way, we don’t need to modify this configuration file every time we want to adjust the configuration; we only need to provide updated environment variables to the Guacamole server container.
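With environment properties enabled, Guacamole derives the variable name from the property name by converting it to upper case and replacing dashes with underscores. The following sketch mirrors that naming rule; the function property_to_env is a made-up helper for illustration only.

```shell
#!/bin/bash
# Hypothetical helper: prints the environment variable name that
# corresponds to a guacamole.properties key (upper case, "-" becomes "_").
property_to_env() {
    printf '%s\n' "$1" | tr '[:lower:]' '[:upper:]' | sed 's/-/_/g'
}

# Usage:
#   property_to_env guacd-hostname
#   property_to_env guacd-port
```

This is why the Deployment can configure the guacd connection purely through variables such as GUACD_HOSTNAME and GUACD_PORT.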

Make Guacamole accessible

Last but not least, we must make the Guacamole server accessible for clients. Although each provided service can access each other via localhost, the same does not apply to clients trying to access Guacamole. Therefore, we must make Guacamole’s server port 8080 available to the outside world. This can be achieved by creating a Kubernetes Service of type NodePort. This service is forwarding each request from a local node port to the corresponding container that is offering the configured target port. In our case, this would be the Guacamole server container which is offering port 8080.

apiVersion: v1
kind: Service
metadata:
  name: web-based-kate
spec:
  type: NodePort
  selector:
    app: web-based-kate
  ports:
  - name: http
    protocol: TCP
    port: 8080
    targetPort: 8080
    nodePort: 30000

This specific port is then mapped to the Node’s 30000 port for which we also configured the kind cluster in such a way that it forwards its node port 30000 to the host system’s port 30000. This port is the one that we would need to use to access Guacamole with our web browsers.
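If you pick a different port, keep in mind that a nodePort has to lie within the range accepted by the API server, which is 30000 to 32767 by default, and that it must match the containerPort mapped in cluster.yaml. A minimal sketch of such a check, with is_valid_nodeport being a hypothetical helper name:

```shell
#!/bin/bash
# Hypothetical helper: succeeds if the argument is a number inside the
# default Kubernetes NodePort range (30000-32767).
is_valid_nodeport() {
    local port="$1"
    case "$port" in
        ''|*[!0-9]*) return 1 ;;   # reject empty or non-numeric input
    esac
    [ "$port" -ge 30000 ] && [ "$port" -le 32767 ]
}

# Usage:
#   is_valid_nodeport 30000 && echo "ok"
```

Clusters started with a custom service node port range would need different bounds.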

Preparation of the Application container

Before we can start to deploy our application, we need to prepare our kate container. For this, we simply create a Debian container that is running kate. Keep in mind that you would typically use lightweight base images like Alpine to run applications like this. For this demonstration, however, we use the Debian image since it is easier to spin up, although in general you only need a small fraction of the functionality that this base image provides. Moreover, from a security point of view, you want to keep your images small to minimize the attack surface and to make them easier to maintain. For now, however, we will continue with the Debian image.

In the example below, you can see a Dockerfile for the kate container.

FROM debian:12

# Install all required packages
RUN apt update && \
    apt install -y x11vnc xvfb kate

# Add user for kate
RUN adduser kate --system --home /home/kate --uid 999

# Copy our entrypoint in the container
COPY entrypoint.sh /opt

USER 999
ENTRYPOINT [ "/opt/entrypoint.sh" ]

Here you see that we create a dedicated user called kate (User ID 999) for which we also create a home directory. This home directory is used for all files that kate creates during runtime. Since we set readOnlyRootFilesystem to true, we must mount some sort of writable volume (e.g. emptyDir) to kate’s home directory. Otherwise, kate wouldn’t be able to write any runtime data.

Moreover, we have to install the following three packages:

- x11vnc

- xvfb

- kate

These are the only packages we need for our container. In addition, we need to create an entrypoint script to start the application and prepare the container accordingly. This entrypoint script creates the configuration for kate, starts it in a virtual display by using xvfb-run and provides this virtual display to end users through the VNC server x11vnc. In between, xdriinfo is used to check whether the virtual display came up successfully after starting kate. If it takes too long, the entrypoint script fails by returning the exit code 1.

By doing this, we ensure that the container is not stuck in an infinite loop during a failure and let Kubernetes restart the container whenever it couldn’t start the application successfully. Furthermore, it is important to check that the virtual display came up before handing it over to the VNC server, because the VNC server would crash if the virtual display is not up and running since it needs something to share. On the other hand, our container will be stopped whenever kate is terminated, because this also terminates the virtual display and in the end the VNC server, which lets the container exit, too. This way, we don’t need to take care of it on our own.

#!/bin/bash
set -e

# If no resolution is provided
if [ -z "$VNC_RESOLUTION_WIDTH" ]; then
  VNC_RESOLUTION_WIDTH=1920
fi
if [ -z "$VNC_RESOLUTION_HEIGHT" ]; then
  VNC_RESOLUTION_HEIGHT=1080
fi

# If no server port is provided
if [ -z "$VNC_SERVER_PORT" ]; then
  VNC_SERVER_PORT=5900
fi

# Prepare configuration for kate
mkdir -p "$HOME/.local/share/kate"
echo "[MainWindow0]
${VNC_RESOLUTION_WIDTH}x${VNC_RESOLUTION_HEIGHT} screen: Height=$VNC_RESOLUTION_HEIGHT
${VNC_RESOLUTION_WIDTH}x${VNC_RESOLUTION_HEIGHT} screen: Width=$VNC_RESOLUTION_WIDTH
${VNC_RESOLUTION_WIDTH}x${VNC_RESOLUTION_HEIGHT} screen: XPosition=0
${VNC_RESOLUTION_WIDTH}x${VNC_RESOLUTION_HEIGHT} screen: YPosition=0
Active ViewSpace=0
Kate-MDI-Sidebar-Visible=false" > "$HOME/.local/share/kate/anonymous.katesession"

# We need to define an XAuthority file
export XAUTHORITY=$HOME/.Xauthority

# Define execution command
APPLICATION_CMD="kate"

# Let's start our application in a virtual display
xvfb-run \
  -n 99 \
  -s ":99 -screen 0 ${VNC_RESOLUTION_WIDTH}x${VNC_RESOLUTION_HEIGHT}x16" \
  -f "$XAUTHORITY" \
  $APPLICATION_CMD &

# Let's wait until the virtual display is initialized before
# we proceed. But don't wait infinitely: "let" returns a non-zero
# status once TIMEOUT reaches 0, and because of "set -e" the
# script then exits with code 1.
TIMEOUT=10
while ! (xdriinfo -display :99 nscreens); do
  sleep 1
  let TIMEOUT-=1
done

# Now, let's make the virtual display accessible by
# exposing it via the VNC Server that is listening on
# localhost and the specified port (e.g. 5900)
x11vnc \
  -display :99 \
  -nopw \
  -localhost \
  -rfbport "$VNC_SERVER_PORT" \
  -forever
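The timeout in the script above relies on a side effect: let returns a non-zero status when its result is 0, and set -e then aborts the script. The same bounded wait can be written more explicitly. The following is a sketch of an alternative, not the script used in this article; wait_for is a hypothetical helper name.

```shell
#!/bin/bash
# Hypothetical helper: retries a command once per second until it succeeds
# or the given number of seconds is used up. Returns 1 on timeout.
wait_for() {
    local timeout="$1"
    shift
    until "$@" >/dev/null 2>&1; do
        if [ "$timeout" -le 0 ]; then
            return 1
        fi
        sleep 1
        timeout=$((timeout - 1))
    done
}

# Usage inside the entrypoint script:
#   wait_for 10 xdriinfo -display :99 nscreens || exit 1
```

Making the exit explicit keeps the behavior the same even if set -e is removed from the script later on.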

After preparing those files, we can now create our image and import it to our Kubernetes cluster by using the following commands:

# Do not forget to give your entrypoint script

# the proper permissions to be executed

$ chmod +x entrypoint.sh

# Next, build the image and import it into kind,

# so that it can be used from within the clusters.

$ sudo docker build -t registry.example.com/own-app/web-based-kate:0.0.1 .

$ sudo kind load -n guacamole docker-image registry.example.com/own-app/web-based-kate:0.0.1

The image will be imported to kind, so that every workload resource operated in our kind cluster can access it. If you use some other Kubernetes cluster, you would need to upload this to a registry that your cluster can pull images from.
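To double check that the image really arrived on the kind node, the node’s container runtime can be queried with crictl. The sketch below assumes a single control-plane node, which kind names after the cluster followed by -control-plane; the function name image_loaded is made up for illustration, and docker may need to be run with sudo as in the commands above.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if the image repository shows up in the
# image list of the kind control-plane node of the given cluster.
image_loaded() {
    local cluster="$1" image="$2" node
    node="${cluster}-control-plane"
    # ${image%:*} strips the tag, crictl lists repository and tag separately
    docker exec "$node" crictl images | grep -F -q -- "${image%:*}"
}

# Usage:
#   image_loaded guacamole registry.example.com/own-app/web-based-kate:0.0.1
```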

Finally, we can also apply our previously created Kubernetes manifests to the cluster. Let’s say we saved everything to one file called kubernetes.yaml. Then, you can simply apply it like this:

$ kubectl apply -f kubernetes.yaml

deployment.apps/web-based-kate configured

secret/guacamole-config configured

service/web-based-kate unchanged

This way, a Kubernetes Deployment, Secret and Service are created, which ultimately results in a Kubernetes Pod that we can access afterwards.

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

web-based-kate-7894778fb6-qwp4z 3/3 Running 0 10m

Verification of our Deployment

Now, it’s money time! After preparing everything, we should be able to access our web based kate application by using our web browser. As mentioned earlier, we configured kind in such a way that we can access our application by using our local port 30000. Every request to this port is forwarded to the kind control plane node, where it is picked up by the Kubernetes Service of type NodePort. This one then forwards all requests to our designated Guacamole server container, which provides the web server for accessing remote applications via Guacamole.

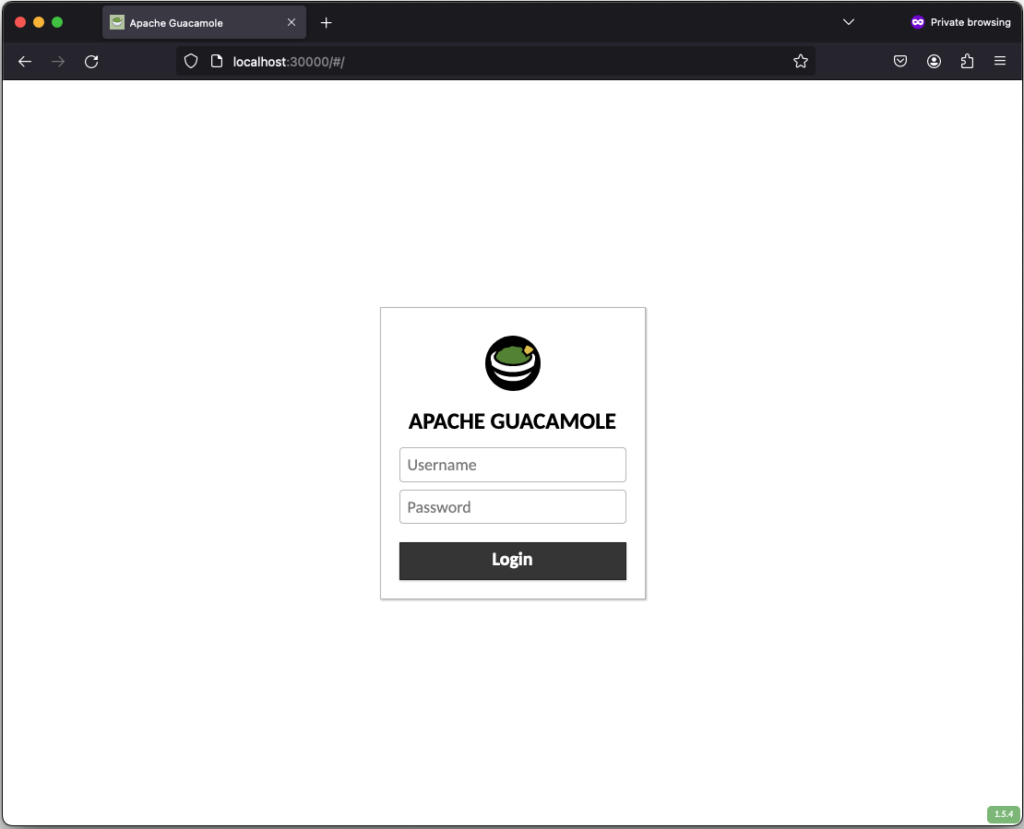

If everything works out, you should be able to see the following login screen:

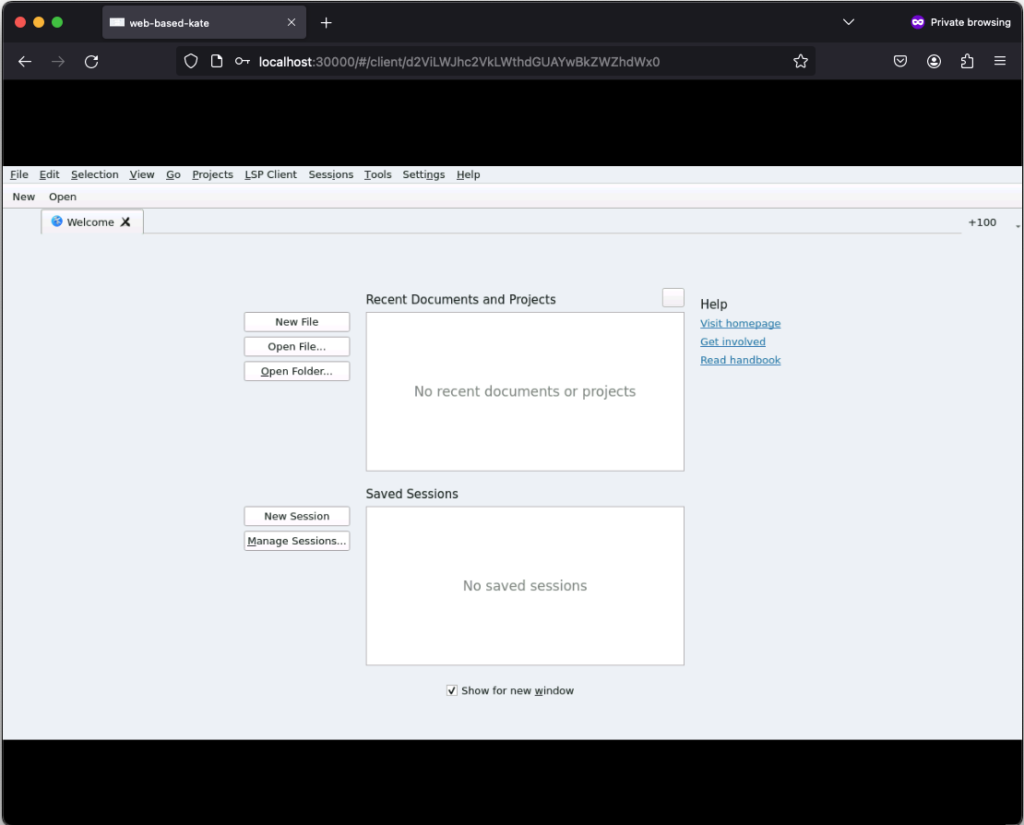

After successfully logging in, the remote connection is established and you should be able to see the welcome screen from kate:

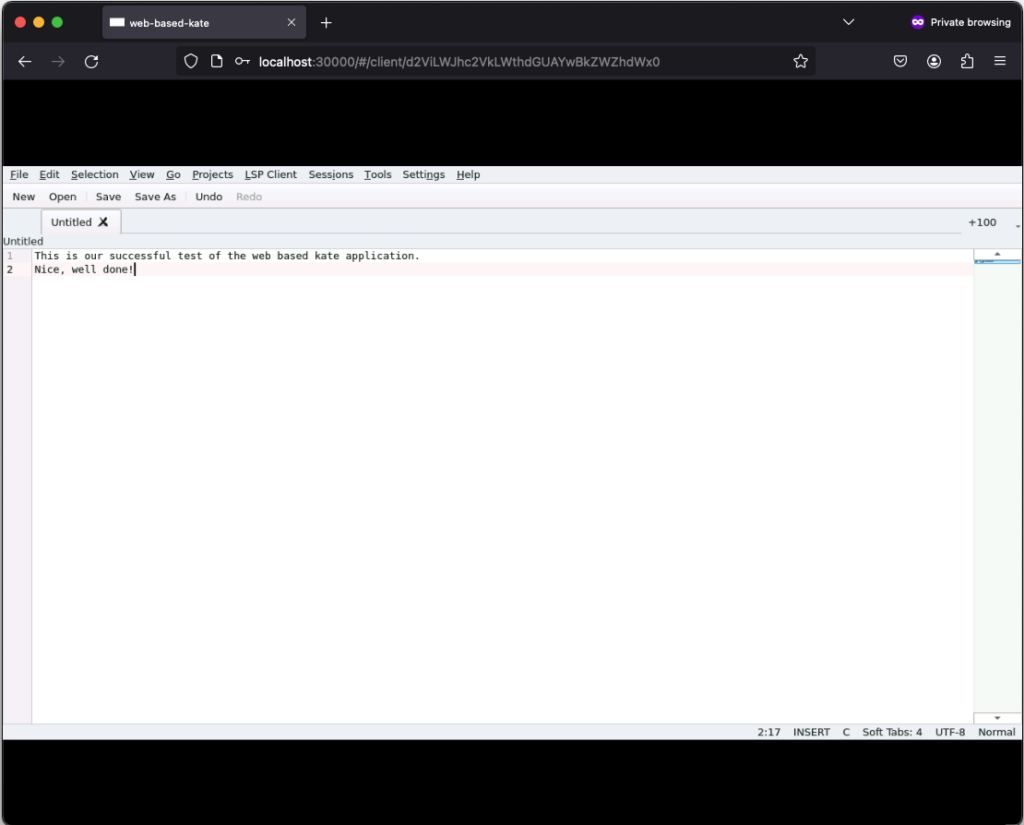

If you click on New, you can create a new text file:

Those text files can even be saved, but keep in mind that they will only exist as long as our Kubernetes Pod exists. Once it gets deleted, the corresponding emptyDir that we mounted into our kate container gets deleted as well and all files in it are lost. Moreover, the container’s root filesystem is set to read-only, meaning that a user can only write files to the volumes (e.g. emptyDir) that we mounted into our container.

Conclusion

After seeing that it’s relatively easy to convert every application to a web based one by using Apache Guacamole, there is only one major question left…

What do you prefer the most: Salsa or Guacamole?

Integrating Proxmox Backup Server into Proxmox Clusters

Proxmox Backup Server

In today’s digital landscape, where data reigns supreme, ensuring its security and integrity is paramount for businesses of all sizes. Enter Proxmox Backup Server, a robust solution poised to revolutionize data protection strategies with its unparalleled features and open-source nature.

At its core, Proxmox Backup Server is a comprehensive backup solution designed to safeguard critical data and applications effortlessly in virtualized environments based on Proxmox VE. Unlike traditional backup methods, Proxmox Backup Server offers a streamlined approach, simplifying the complexities associated with data backup and recovery.

One of the standout features of Proxmox Backup Server is its seamless integration with Proxmox Virtual Environment (PVE), creating a cohesive ecosystem for managing virtualized environments. This integration allows for efficient backup and restoration of Linux containers and virtual machines, ensuring minimal downtime and maximum productivity. Without the need for a backup client in each container or virtual machine, this solution can back up and restore entire systems as well as single files directly from the filesystem.

Proxmox Backup Server provides a user friendly interface, making it accessible to both seasoned IT professionals and newcomers alike. With its intuitive design, users can easily configure backup tasks, monitor progress, and retrieve data with just a few clicks, eliminating the need for extensive training or technical expertise.

Data security is a top priority for businesses across industries and Proxmox Backup Server delivers on this front. Combined with ZFS, it also brings enterprise filesystem features such as encryption at rest, encryption in transit, checksums, snapshots, deduplication and compression. Alternatively, iSCSI or NFS storage from enterprise storage solutions, for example from NetApp, can be integrated.

Another notable aspect of Proxmox Backup Server is its cost effectiveness. As an open-source solution, it eliminates the financial barriers (also in addition with the Proxmox VE solutions) associated with proprietary backup software.

Integrating Proxmox Backup Server into Proxmox Clusters

General

This guide expects that you already have at least one Proxmox VE system up and running, as well as a system with a basic installation of Proxmox Backup Server. In this example, Proxmox Backup Server is installed on a single disk, while the datastore is placed on an additional block device that holds the backups. The Proxmox VE and Proxmox Backup Server instances do not need to be in the same network, but they must be able to reach each other. The integration requires administrative access to the datacenter of the Proxmox VE instance(s) and to the Backup Server.

Prerequisites

- Proxmox VE (including the datacenter).

- Proxmox Backup Server (basic installation).

- Administrative access to all systems.

- Network reachability.

- Storage device holding the backups (in this case a dedicated block storage device).

Administration: Proxmox Backup Server

Like the Proxmox VE environment, the Proxmox Backup Server comes along with a very intuitive web frontend. Unlike the web frontend of Proxmox VE, which runs on tcp/8006, the Proxmox Backup Server can be reached on tcp/8007. Therefore, all next tasks will be done on https://<IP-PROXMOX-BACKUP-SERVER>:8007.

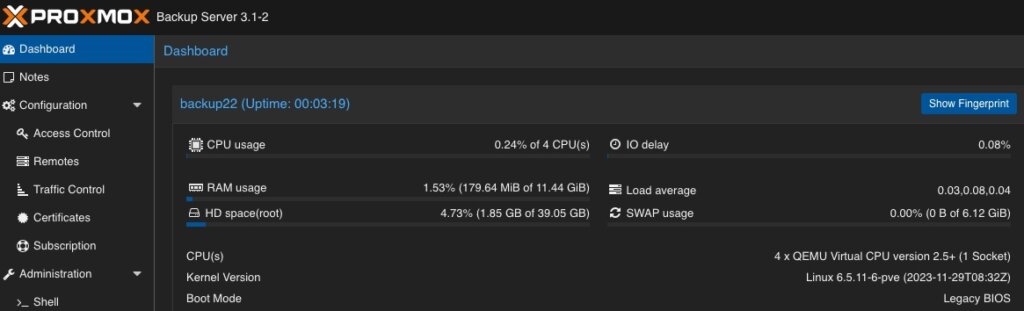

After logging in to the web frontend, the dashboard overview welcomes the user.

Adding Datastore / Managing Storage

The first major task is managing the storage and adding a usable datastore that holds the backup data for the virtualization environment. To do so, we switch to the Administration section and click on Storage / Disks. This provides an overview of the available devices on the Proxmox Backup Server. As already mentioned, this example uses a dedicated block storage device with ZFS to benefit from checksums, deduplication and compression. ZFS can of course also be used with multiple disks (so-called RAID-Z levels), and other solutions such as directories or NFS shares are possible as well. Coming back to our example, we can see the empty /dev/sdb device which will be used to store all backup files.

By clicking on ZFS in the top menu bar, a ZFS pool can be created as a datastore. In this dialog, a name, the RAID level, the compression and the devices to use must be defined. As already mentioned, we can attach multiple disks and define a desired RAID level. The given example only consists of a single disk, which is selected here. Compression is optional, but using LZ4 is recommended. As a lossless compression algorithm, LZ4 aims to provide a good trade-off between speed and compression ratio, so its overhead is barely noticeable on today’s systems.

Make sure the Add as Datastore option (enabled by default) is checked; it directly creates a usable datastore with the given name. In our example this will be backup01.

Keep in mind that this step is not needed when using an NFS share. Also, do not use ZFS on top of hardware RAID controllers.
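The same step can also be performed on the command line of the Proxmox Backup Server with proxmox-backup-manager. The following is a minimal sketch under the assumptions of this example (a single disk sdb and the datastore name backup01); the wrapper function create_backup_zpool is hypothetical, and compression is left at its default here.

```shell
#!/bin/bash
# Hypothetical wrapper: creates a single-disk ZFS pool and registers it
# as a datastore with the same name on the Proxmox Backup Server.
create_backup_zpool() {
    local name="$1" devices="$2"
    proxmox-backup-manager disk zpool create "$name" \
        --devices "$devices" \
        --raidlevel single \
        --add-datastore true
}

# Usage (run on the Proxmox Backup Server):
#   create_backup_zpool backup01 sdb
```

For multiple disks, the device list is comma separated and the RAID level would be adjusted accordingly.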

Adding User for Backup

In the next step, a dedicated user is created that is used for the datastore permissions and by the Proxmox VE instances for authentication and authorization. This allows even complex setups with different datastores and different users with different access levels (e.g., reading, writing, auditing, …) on different clusters and instances. To keep the demonstration simple, a single user is used for everything.

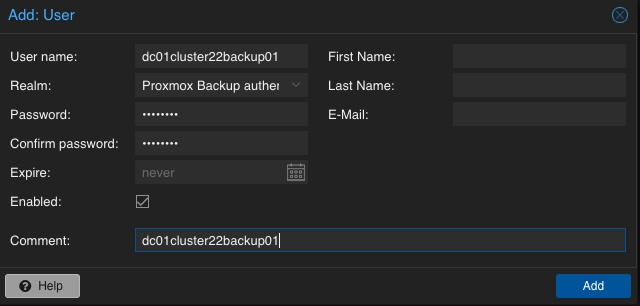

A new user is configured by selecting Configuration, Access Control and User Management in the left menu. There, a new user can be created by simply defining a name and a password. The realm should stay on the default, the Proxmox Backup authentication provider. Depending on the complexity of your naming scheme, choose a meaningful user name. In the given example, the user is called dc01cluster22backup01.

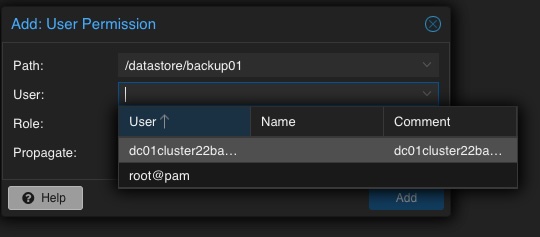

Adding Permission of User for Datastore

As mentioned, complex setups regarding authentication and authorization are possible, but the datastore must be linked to at least one user that can access it. Therefore, we go back to the Datastore section and select the previously created backup01 datastore. In the top menu bar, permissions can be created and adjusted in the Permissions tab. Initially, a new one is created now. In the following dialog, the datastore path, the user and the role must be defined:

Path: /datastore/backup01

User: dc01cluster22backup01@pbs

Role: DatastoreAdmin

Propagate: True

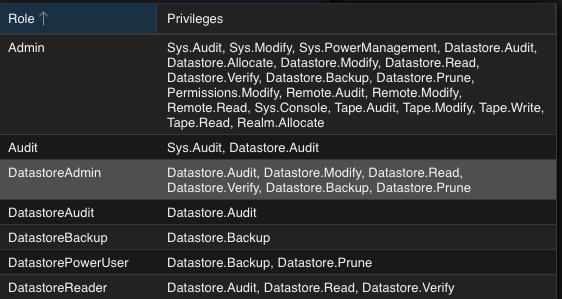

For a short overview, the available roles are listed here without further explanation:

- Admin

- Audit

- DatastoreAdmin

- DatastoreAudit

- DatastoreBackup

- DatastorePowerUser

- DatastoreReader
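The permission from the dialog above can also be granted on the command line of the Proxmox Backup Server. This is a sketch using the values of this example; the wrapper grant_datastore_role is a hypothetical helper, and a narrower role from the list above can be passed as the third argument.

```shell
#!/bin/bash
# Hypothetical wrapper: grants a role on a datastore path to a user.
# The role defaults to DatastoreAdmin, as used in this example.
grant_datastore_role() {
    local datastore="$1" auth_id="$2" role="${3:-DatastoreAdmin}"
    proxmox-backup-manager acl update "/datastore/${datastore}" "$role" \
        --auth-id "$auth_id"
}

# Usage (run on the Proxmox Backup Server):
#   grant_datastore_role backup01 dc01cluster22backup01@pbs
#   proxmox-backup-manager acl list    # shows the resulting entries
```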

Administration: Proxmox VE

The integration of the backup datastore is performed from the Proxmox VE instances via the Datacenter. As a result, the Proxmox VE web frontend is now used for the further administrative actions. The Proxmox VE web frontend runs on tcp/8006. Therefore, all next tasks will be done on https://<IP-PROXMOX-VE-SERVER>:8006.

Adding Storage

Integrating the Proxmox Backup Server works the same way as managing and adding shared storage to a Proxmox datacenter.

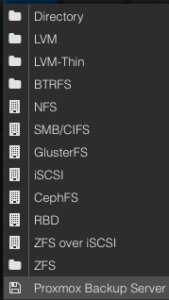

In the left menu, we choose the active datacenter and select the Storage options. There, we find all natively supported storage options of Proxmox (NFS, SMB/CIFS, iSCSI, ZFS, GlusterFS, …) and finally select Proxmox Backup Server as a dedicated item.

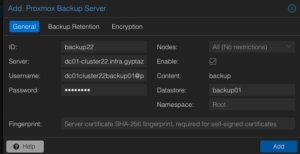

Afterwards, the details for adding this datastore to the datacenter must be inserted. The following options need to be defined:

ID: backup22


Server: <FQDN-OR-IP-OF-BACKUP-SERVER>

Username: dc01cluster22backup01@pbs

Password: <THE-PASSWORD-OF-THE-USER>

Enable: True

Datastore: backup01

Fingerprint: <SYSTEM-FINGERPRINT-OF-BACKUP-SERVER>

Optionally, the Backup Retention and Encryption can also be configured before adding the new backup datastore. While the backup retention can also be configured on the Proxmox Backup Server (which is recommended), enabling the encryption should be considered. Selecting and activating the encryption is easily done by setting it to Auto-generate a client encryption key. Depending on your previous setup, an already existing key can also be uploaded and used.

After adding this backup datastore to the datacenter, it can immediately be used for backups and the integration is complete.
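For scripted setups, the same storage entry can be added from a shell on a Proxmox VE node with pvesm. The sketch below reuses the values from this example; add_pbs_storage is a hypothetical wrapper, the trailing --password makes pvesm prompt for the password interactively, and the fingerprint can be read on the Backup Server, for example with proxmox-backup-manager cert info.

```shell
#!/bin/bash
# Hypothetical wrapper: registers a Proxmox Backup Server datastore as
# storage in the Proxmox VE datacenter. Prompts for the user's password.
add_pbs_storage() {
    local id="$1" server="$2" datastore="$3" username="$4" fingerprint="$5"
    pvesm add pbs "$id" \
        --server "$server" \
        --datastore "$datastore" \
        --username "$username" \
        --fingerprint "$fingerprint" \
        --password
}

# Usage (run on a Proxmox VE node, placeholders as in the list above):
#   add_pbs_storage backup22 <FQDN-OR-IP-OF-BACKUP-SERVER> backup01 \
#       dc01cluster22backup01@pbs <SYSTEM-FINGERPRINT-OF-BACKUP-SERVER>
```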

Conclusion

With the Proxmox Backup Server, Proxmox provides an enterprise backup solution for backing up Linux containers and virtual machines. It supports features like incremental and fully deduplicated backups by building on different open-source solutions, combined with strong encryption and data integrity. This solution is proof that open-source software can compete with closed-source enterprise software. Together with Proxmox VE, enterprise-grade virtualization environments can be created and managed without missing the typical enterprise feature set. Proxmox VE and the Proxmox Backup Server can also be used together with storage appliances from vendors like NetApp by directly using iSCSI or NFS.

This was a simple example; there are of course much more complex scenarios which can be created and should be considered. We are happy to provide you with more information and to assist you in creating such setups. We also provide help for migrating from other products to Proxmox VE setups. Feel free to contact us at any time for more information.

Migrating VMs from VMware ESXi to Proxmox

In response to Broadcom’s recent alterations in VMware’s subscription model, an increasing number of enterprises are reevaluating their virtualization strategies. With heightened concerns over licensing costs and accessibility to features, businesses are turning towards open source solutions for greater flexibility and cost-effectiveness. Proxmox VE, in particular, has garnered significant attention as a viable alternative. Renowned for its robust feature set and open architecture, Proxmox offers a compelling platform for organizations seeking to mitigate the impact of proprietary licensing models while retaining comprehensive virtualization capabilities. This trend underscores a broader industry shift towards embracing open-source technologies as viable alternatives in the virtualization landscape. Just to mention, Proxmox is widely known as a viable alternative to VMware ESXi but there are also other options available, such as bhyve which we also covered in one of our blog posts.

Benefits of Open-Source Solutions

In the dynamic landscape of modern business, the choice to adopt open source solutions for virtualization presents a strategic advantage for enterprises. With platforms like KVM, Xen and even LXC containers, organizations can capitalize on the absence of license fees, unlocking significant cost savings and redirecting resources towards innovation and growth. This financial flexibility empowers companies to make strategic investments in their IT infrastructure without the burden of proprietary licensing costs. Moreover, open source virtualization promotes collaboration and transparency, allowing businesses to tailor their environments to suit their unique needs and seamlessly integrate with existing systems. Through community-driven development and robust support networks, enterprises gain access to a wealth of expertise and resources, ensuring the reliability, security, and scalability of their virtualized infrastructure. Embracing open source virtualization not only delivers tangible financial benefits but also equips organizations with the agility and adaptability needed to thrive in an ever-evolving digital landscape.

Migrating a VM

Prerequisites

Several prerequisites must be met for a smooth migration from VMware ESXi to Proxmox:

- SSH is enabled on the VMware ESXi host and on the Proxmox host, and you have administrative access to both systems.

- The Proxmox host can establish an SSH connection to the ESXi host, since the VM data is transferred over this connection.

- The Proxmox VE system or cluster is configured similarly to the ESXi setup, especially regarding networking, including VLANs or VXLANs in more complex setups.

- Both systems either run on local storage or have access to shared storage such as NFS.

- The Proxmox system has enough free space to hold the imported virtual machine.
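The connectivity and free-space prerequisites can be checked from the Proxmox host before any data is copied. The following is a minimal sketch, not a definitive pre-flight script: the function names and the 10% safety margin are our own assumptions, and the SSH check assumes key-based login to the ESXi host has already been set up.

```shell
#!/bin/sh
# Pre-flight helpers, intended to be run on the Proxmox host.
# All function names and the 10% margin are illustrative assumptions.

# has_enough_space NEEDED_KB AVAILABLE_KB
# Succeeds if the available space covers the VM size plus a 10% margin.
has_enough_space() {
    needed_kb=$1
    available_kb=$2
    [ "$available_kb" -ge $(( needed_kb + needed_kb / 10 )) ]
}

# available_kb DIR
# Prints the free space (in KiB) of the file system that holds DIR.
available_kb() {
    df -Pk "$1" | awk 'NR == 2 { print $4 }'
}

# esxi_reachable HOST
# Succeeds if a non-interactive SSH login as root on tcp/22 works.
# BatchMode=yes makes ssh fail instead of prompting for a password.
esxi_reachable() {
    ssh -o BatchMode=yes -o ConnectTimeout=5 "root@$1" true
}
```

For a VM of roughly 40 GiB, a call such as `esxi_reachable esx02-test.gyptazy.ch && has_enough_space 41943040 "$(available_kb /tmp)"` would confirm both conditions for the temporary directory used later in this article.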

Activate SSH on ESXi

The SSH server on the ESXi host must be activated so that the VM's files can be copied to their new location on the Proxmox server. The copy is later initiated from the Proxmox server, so the Proxmox system must be able to establish an SSH connection to the ESXi system on tcp/22:

- Log in to the VMware ESXi host.

- Navigate to Configuration > Security Profile.

- Enable SSH under Services.

Find Source Information about VM on ESXi

One of the more challenging parts is finding the location of the directory that holds the virtual machine's disk. The path can be found in the web UI of the ESXi system:

- Locate the ESXi node that runs the virtual machine to be migrated.

- Identify the virtual machine to be migrated (e.g., pgsql07.gyptazy.ch).

- Obtain the location of the virtual disk (VMDK) associated with the VM from the configuration panel.

- The VM location path should be shown (e.g., /vmfs/volumes/137b4261-68e88bae-0000-000000000000/pgsql07.gyptazy.ch).

- Shut down the VM.
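The same information is also available on the command line of the ESXi host through `vim-cmd`. The sketch below is one possible way to look up the numeric VM ID and request a guest shutdown over SSH from the Proxmox host; the helper names are hypothetical, the parser assumes VM names without spaces, and `power.shutdown` only works when VMware Tools are running in the guest.

```shell
#!/bin/sh
# Helper names are illustrative; host and VM names follow the article's examples.

# vmid_by_name NAME
# Reads the output of `vim-cmd vmsvc/getallvms` on stdin and prints the
# Vmid of the row whose Name column equals NAME (names without spaces only).
vmid_by_name() {
    awk -v name="$1" 'NR > 1 && $2 == name { print $1 }'
}

# shutdown_vm_on_esxi ESXI_HOST VM_NAME
# Looks up the VM on the ESXi host and asks the guest to shut down.
shutdown_vm_on_esxi() {
    vmid=$(ssh "root@$1" vim-cmd vmsvc/getallvms | vmid_by_name "$2")
    if [ -z "$vmid" ]; then
        echo "VM $2 not found on $1" >&2
        return 1
    fi
    # Graceful guest shutdown; requires VMware Tools inside the VM.
    ssh "root@$1" vim-cmd vmsvc/power.shutdown "$vmid"
}
```

The File column of `vim-cmd vmsvc/getallvms` also shows the datastore and directory of each VM, which corresponds to the path under /vmfs/volumes/ noted above.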

Create a New Empty VM on Proxmox

- Create a new empty VM in Proxmox.

- Assign the same resources as in the ESXi setup.

- Set the network type to VMware vmxnet3.

- Ensure the needed network resources (e.g., VLAN, VXLAN) are properly configured.

- Set the SCSI controller for the disk to VMware PVSCSI.

- Do not create a new disk (this will be imported later from the ESXi source).

- Each VM gets an ID assigned by Proxmox (note it down, it will be needed later).
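The steps above can also be performed with the `qm` command line tool instead of the web UI. The following sketch wraps `qm create` in a small function; the memory size, core count and bridge name in the usage note are assumptions for illustration, and the `run` helper only prints the command unless `DRY_RUN=0` is set, so the call can be reviewed before it touches the system.

```shell
#!/bin/sh
# run CMD...: print the command by default, execute it when DRY_RUN=0.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# create_empty_vm VMID NAME MEMORY_MB CORES BRIDGE
# Creates a VM without any disk, using the vmxnet3 NIC model and the
# VMware PVSCSI controller as described in the list above.
create_empty_vm() {
    run qm create "$1" --name "$2" --memory "$3" --cores "$4" \
        --net0 "vmxnet3,bridge=$5" --scsihw pvscsi
}
```

Calling `create_empty_vm 119 pgsql07.gyptazy.ch 4096 2 vmbr0` prints the resulting `qm create` command; with `DRY_RUN=0` it is executed. Choosing the ID explicitly (119 here) removes the need to look it up afterwards.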

Copy VM from ESXi to Proxmox

The content of the virtual machine (VM) is transferred from the ESXi to the Proxmox system using the open-source tool rsync for efficient synchronization and copying. The following commands are executed on the Proxmox system; they create a temporary directory for the VM’s content and copy the files into it:

mkdir /tmp/migration_pgsql07.gyptazy.ch
cd /tmp/migration_pgsql07.gyptazy.ch
rsync -avP root@esx02-test.gyptazy.ch:/vmfs/volumes/137b4261-68e88bae-0000-000000000000/pgsql07.gyptazy.ch/* .

Depending on the size of the virtual machine and the network connectivity, this process may take some time.

Import VM in Proxmox

Afterwards, the disk is imported using the qm utility, defining the VM ID (which got created during the VM creation process), along with specifying the disk name (which has been copied over) and the destination data storage on the Proxmox system where the VM disk should be stored:

qm disk import 119 pgsql07.gyptazy.ch.vmdk local-lvm

Depending on how the VM was created or exported, there may be multiple disk files, some of which may be suffixed with _flat. This procedure needs to be repeated for all available disks.
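With several disks, the import can be scripted. The sketch below rests on a few assumptions that should be verified for each environment: the VM has no snapshots, the flat files are data extents that are read through their descriptor .vmdk file (so only the descriptors are passed to `qm disk import`), and the first imported volume on the target storage is named vm-<ID>-disk-0. The helper names are hypothetical, and `run` only prints the commands unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# run CMD...: print the command by default, execute it when DRY_RUN=0.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# list_descriptors DIR
# Prints the VMDK descriptor files in DIR and skips flat data extents.
list_descriptors() {
    for f in "$1"/*.vmdk; do
        [ -e "$f" ] || continue
        case "$f" in
            *-flat.vmdk|*_flat.vmdk) ;;      # data extent, not a descriptor
            *) printf '%s\n' "$f" ;;
        esac
    done
}

# import_all_disks VMID DIR STORAGE
# Imports every descriptor found in DIR into the given VM and storage.
import_all_disks() {
    list_descriptors "$2" | while IFS= read -r vmdk; do
        run qm disk import "$1" "$vmdk" "$3"
    done
}

# attach_first_disk VMID STORAGE
# Attaches the first imported volume as scsi0 and boots from it.
# The volume name vm-VMID-disk-0 is an assumption; check `qm config VMID`.
attach_first_disk() {
    run qm set "$1" --scsi0 "$2:vm-$1-disk-0" --boot order=scsi0
}
```

An imported disk initially shows up as an unused disk in the VM configuration, which is why the attach step is listed separately. For the example in this article, the calls would be `import_all_disks 119 /tmp/migration_pgsql07.gyptazy.ch local-lvm` followed by `attach_first_disk 119 local-lvm`.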

Starting the VM

In the final step, all settings, resources, definitions and customizations of the system should be thoroughly reviewed. Once validated, the VM can be launched, ensuring that all components are correctly configured for operation within the Proxmox environment.

Conclusion

This article only covers one of many possible methods for migrations in simple, standalone setups. In more complex environments involving multiple host nodes and different storage systems like fibre channel or network storage, there are significant differences and additional considerations. Additionally, there may be specific requirements regarding availability and Service Level Agreements (SLAs) to be considered, which can be very specific to each environment. Feel free to contact us for personalized guidance on your specific migration needs at any time. We are also pleased to offer our support in related areas in open source such as virtualization (e.g., OpenStack, VirtualBox) and topics pertaining to cloud migrations.

Addendum

On the 27th of March, Proxmox released its new import wizard (pve-esxi-import-tools), which makes migrations from VMware ESXi instances to a Proxmox environment much easier. In an upcoming blog post we will provide more information about the new tooling, the cases where it is most useful, and the corner cases where the new import wizard cannot be used.

As described in the previous post, access control on Unix-like systems is traditionally based on the principle of Discretionary Access Control (DAC). Applications and services run under a specific user and group ID and are granted the corresponding access rights to files and folders.

AppArmor implements a Mandatory Access Control for Linux, based on the Linux Security Modules: an access control strategy that allows specific rights to be granted or denied to individual programs. This security layer exists in addition to the traditional DAC.

Since Debian 10 buster, AppArmor has been included and activated in the kernel by default. The packages apparmor and apparmor-utils provide tools for creating and maintaining AppArmor profiles.
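Whether AppArmor is actually active on a given system can be checked through the kernel module parameter. The following is a small sketch; the function name is our own, and the optional argument exists only so that the check can be pointed at a different file.

```shell
#!/bin/sh
# apparmor_enabled [PARAM_FILE]
# Succeeds if the kernel reports AppArmor as enabled ("Y").
# PARAM_FILE defaults to the sysfs parameter of the apparmor module.
apparmor_enabled() {
    param_file=${1:-/sys/module/apparmor/parameters/enabled}
    [ -r "$param_file" ] && [ "$(cat "$param_file")" = "Y" ]
}
```

On a Debian system, `apparmor_enabled && aa-status` (as root) then lists the loaded profiles and their modes, and `apt install apparmor-utils` provides the tools used in the rest of this article.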

Included Profiles

The two packages mentioned do not come with ready-made profiles; they only contain the Abstractions mentioned in the previous article: collections of rules that can be included in multiple profiles.

Some programs ship their profiles in their own packages, while others only gain a profile when a corresponding module is installed later, for example mod_apparmor for the Apache web server.

The packages apparmor-profiles and apparmor-profiles-extra contain AppArmor profiles that can be found after installation in the directories /etc/apparmor.d (for tested profiles) and /usr/share/apparmor/extra-profiles (for experimental profiles), respectively. These profiles can be used as a basis for custom profiles.

Create Profiles Yourself

While at least experimental profiles are available for most common server services, such as the Apache web server, nothing can be found for the nginx web server. However, this is not a major issue, as a new AppArmor profile can be quickly created with the help of apparmor-utils.

Nginx Example

The following assumes a simple base installation of nginx that only serves HTML files under /var/www/html via HTTP. The focus here is primarily on the general approach, so repetitive steps will be skipped.

The described approach can be applied to any other program. To find out which paths and files a program uses, dpkg can be called with the -L option, which lists all paths of a package. Several packages may need to be queried for this: for nginx, the package of the same name provides hardly any useful information, which is only obtained from the nginx-common package:

# dpkg -L nginx-common

For the following steps, it is recommended to have two terminals open with root privileges.

Before the web server process can be observed for profile creation, all its running processes must be terminated:

# systemctl stop nginx

Once all processes are stopped, aa-genprof is called in the second terminal with the path of the web server’s program file:

# aa-genprof /usr/sbin/nginx

Some information about the current call of aa-genprof appears, including the hint Profiling: /usr/sbin/nginx, followed by Please start the application to be profiled in another window and exercise its functionality now.

To comply with this, the web server process is restarted in the first terminal window:

# systemctl start nginx

Before calling the S option in the second window to search the log files for AppArmor events, the web server should run for a few moments, and it should also be accessed from a browser so that as many typical activities of the process as possible are recorded.

Once this is done, the log files can be searched for events by pressing the S key:

[(S)can system log for AppArmor events] / (F)inish
Reading log entries from /var/log/syslog.
Updating AppArmor profiles in /etc/apparmor.d.
Complain-mode changes:

If an event is found, the affected profile and the action recorded by AppArmor are displayed:

Profile: /usr/sbin/nginx
Capability: dac_override
Severity: 9

[1 - capability dac_override,]
(A)llow / [(D)eny] / (I)gnore / Audi(t) / Abo(r)t / (F)inish

Here, the program /usr/sbin/nginx requests the Capability dac_override, which was already described in the last article. It is indispensable for the operation of the web server and is allowed by pressing A. Alternatively, the request can be denied with D or ignored with I. With the Audit option, this request would continue to be recorded in the log file during operation.

Profile: /usr/sbin/nginx
Capability: net_bind_service
Severity: 8

[1 - #include <abstractions/nis>]
2 - capability net_bind_service,

The next event shows that the process requests the Capability net_bind_service, which is needed to open a port with a port number less than 1024.

Unlike the first query, there are two ways to allow access in the future: the first option involves integrating Abstractions for NIS, the Network Information Service. In this Abstraction, which can be found under /etc/apparmor.d/abstractions/nis, in addition to a rule that allows access to rule sets for NIS, the Capability net_bind_service is also listed.

However, since the HTTP server does not include NIS functionality, it is sufficient to only allow the Capability. By pressing 2 and A, this is adopted into the profile.

The same applies to the Abstractions proposed in the following steps for dovecot and postfix: here it is sufficient to only allow the Capabilities setgid and setuid.

Sometimes the designation of the Abstractions can be somewhat misleading: for example, the Abstraction nameservice contains, in addition to rules that allow read access to common nameservice files like passwd or hosts, also rules that permit network access. It is therefore always worthwhile to take a look at the respective file under /etc/apparmor.d/abstractions/ to see if including the Abstraction is beneficial.
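A quick way to see what an Abstraction grants beyond plain file access is to filter its file for network and capability rules. This is a minimal sketch with a hypothetical function name; it only looks at the given file and does not follow further includes inside it.

```shell
#!/bin/sh
# abstraction_grants FILE
# Prints the network and capability rules of an abstraction file.
# Comment lines start with '#' and therefore never match.
abstraction_grants() {
    grep -E '^[[:space:]]*(network|capability)[[:space:],]' "$1"
}
```

For example, `abstraction_grants /etc/apparmor.d/abstractions/nameservice` shows the network rules that the nameservice Abstraction brings along, and `abstraction_grants /etc/apparmor.d/abstractions/nis` shows the capability discussed above.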

After the web server process has received all necessary Capabilities, it apparently tries to open its error log file /var/log/nginx/log with write permissions. It is noticeable here that, in addition to the usual Allow, Deny, and Ignore, the options Glob and Glob with Extension have been added.